News

Huawei launches new AI storage for large-scale models

Today, Huawei launched new AI storage products for the large-scale model industry. The new products provide storage solutions for several stages of model work: basic model training, industry model training, and training and inference for segmented-scenario models.

Huawei also outlined four challenges in developing and deploying large-scale model applications:

- First, data preparation takes a long time because data sources are scattered and collection is slow. Preprocessing 100 TB of data takes about 10 days.

- Second, multi-modal large models use massive volumes of text and images as training sets, but massive numbers of small files currently load at less than 100 MB/s, so training sets load inefficiently.

- Third, large model parameters are adjusted frequently and training platforms are unstable. Training is interrupted about once every two days on average, a checkpoint mechanism is needed to resume training, and failure recovery takes more than one day.

- Fourth, the barrier to implementing large models is high: system construction is complicated, resource scheduling is difficult, and GPU resource utilization is usually below 40%.
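To put the first and third figures in perspective, here is a rough back-of-the-envelope sketch. It assumes decimal units (1 TB = 10^6 MB) and reads the third challenge as a simple cycle of two days of training followed by one day of recovery. Both are simplifying assumptions for illustration, not figures from Huawei, and the function names are hypothetical.

```python
# Back-of-the-envelope arithmetic for the figures quoted in the list above.
# Assumption: decimal units (1 TB = 10**6 MB).

SECONDS_PER_DAY = 24 * 60 * 60


def implied_throughput_mb_s(data_tb: float, days: float) -> float:
    """Average throughput in MB/s needed to process data_tb within the given days."""
    total_mb = data_tb * 1_000_000  # 1 TB = 10**6 MB
    return total_mb / (days * SECONDS_PER_DAY)


def productive_fraction(run_days: float, recovery_days: float) -> float:
    """Share of wall-clock time spent training in a run-then-recover cycle."""
    return run_days / (run_days + recovery_days)


# 100 TB preprocessed in about 10 days
preprocess_rate = implied_throughput_mb_s(100, 10)

# Assumed cycle: 2 days of training, then 1 day of recovery
uptime = productive_fraction(2, 1)

print(f"Implied preprocessing rate: {preprocess_rate:.1f} MB/s")
print(f"Productive training time:   {uptime:.0%}")
```

Under these assumptions, the quoted preprocessing time corresponds to an average rate of roughly 116 MB/s, and at most about two thirds of wall-clock time goes to actual training.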

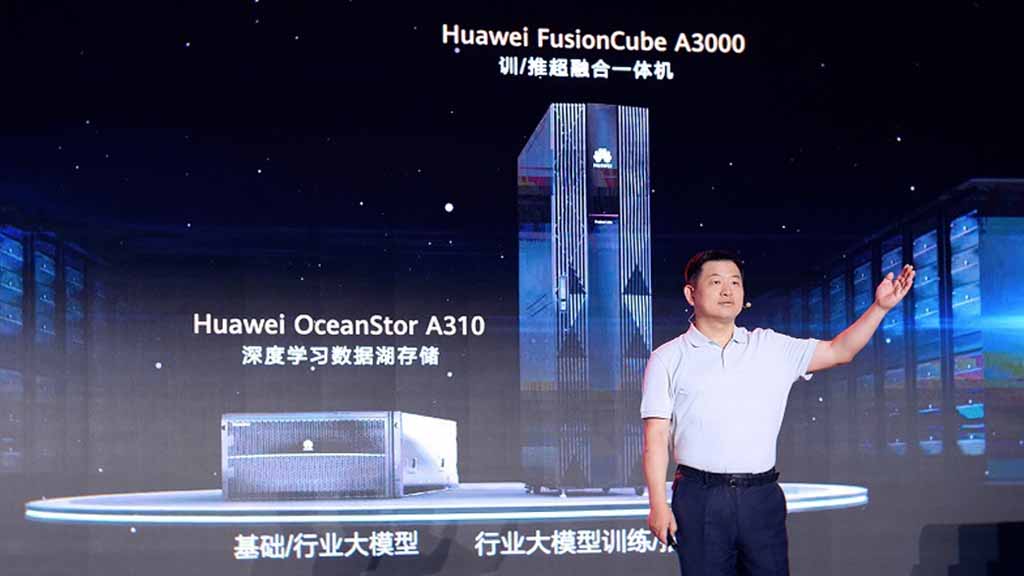

To address these issues, Huawei launched the OceanStor A310 deep learning data lake storage and the FusionCube A3000 training/inference hyper-converged appliance, both aimed at large-scale model applications in different industries and scenarios.

OceanStor A310

The OceanStor A310 deep learning data lake storage targets data lake scenarios for basic and industry large models. It manages massive data across the entire AI process, from data collection and preprocessing to model training and inference.

A single 5U OceanStor A310 enclosure supports the industry’s highest bandwidth of 400 GB/s and the highest performance of 12 million IOPS. It can scale linearly to 4096 nodes and provides lossless multi-protocol interworking.

The Global File System (GFS) provides intelligent data weaving across regions, which simplifies data collection. Near-memory computing enables near-data preprocessing, which reduces data migration and improves preprocessing efficiency by 30%.

FusionCube A3000

The FusionCube A3000 training/inference hyper-converged appliance targets industry large model training and inference scenarios, for models with tens of billions of parameters. It integrates OceanStor A300 high-performance storage nodes, training/inference nodes, switching devices, AI platform software, and management and operations software, giving large-scale model partners a turnkey deployment experience with one-stop delivery.

It works out of the box and can be deployed within 2 hours. Training/inference nodes and storage nodes can each be scaled out independently to match models of different sizes.

The FusionCube A3000 also uses high-performance containers so that multiple model training and inference tasks can share GPUs, raising resource utilization from 40% to over 70%. It is available under two business models: a one-stop solution based on Huawei Ascend, and a one-stop solution from third-party partners with open computing, networking, and AI platform software.
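As a rough illustration of what the utilization claim means in practice, the sketch below compares 40% and 70% utilization. It assumes useful work scales linearly with utilization, and the 100-GPU cluster size is a hypothetical example, not a figure from the announcement.

```python
# Rough illustration of the 40% -> 70% GPU utilization claim.
# Assumption: useful work scales linearly with utilization.


def throughput_gain(old_util: float, new_util: float) -> float:
    """Factor by which useful work per GPU increases."""
    return new_util / old_util


def gpus_for_same_work(gpus: int, old_util: float, new_util: float) -> float:
    """GPUs needed at new_util to match the useful work of `gpus` at old_util."""
    return gpus * old_util / new_util


gain = throughput_gain(0.40, 0.70)
equivalent = gpus_for_same_work(100, 0.40, 0.70)  # hypothetical 100-GPU cluster

print(f"Useful work per GPU: {gain:.2f}x")
print(f"GPUs needed for the same work: {equivalent:.1f} of 100")
```

Under that linear assumption, the same hardware delivers about 1.75 times the useful work, or equivalently the same work fits on a little under 60% of the GPUs.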

Zhou Yuefeng, president of Huawei’s data storage product line, said: “In the era of large-scale models, data determines the height of AI intelligence. As the carrier of data, data storage has become a key infrastructure for AI large-scale models. Huawei’s data storage will continue to innovate, provide a variety of solutions and products for the era of AI large-scale models, and work with partners to promote AI empowerment across industries.”